Amazon Redshift powers the modern data architecture, which enables you to query data across your data warehouse, data lake, and operational databases to gain faster and deeper insights not possible otherwise. With a modern data architecture, you can store data in semi-structured format in your Amazon Simple Storage Service (Amazon S3) data lake and integrate it with structured data on Amazon Redshift. This allows you to make this data available to other analytics and machine learning applications rather than locking it in a silo. In this post, we discuss the UNLOAD feature in Amazon Redshift and how to export data from an Amazon Redshift cluster to JSON files on an Amazon S3 data lake.

JSON support features in Amazon Redshift

Amazon Redshift features such as COPY, UNLOAD, and Amazon Redshift Spectrum enable you to move and query data between your data warehouse and data lake.

With the UNLOAD command, you can export a query result set in text, JSON, or Apache Parquet file format to Amazon S3. The UNLOAD command is also recommended when you need to retrieve large result sets from your data warehouse. Because UNLOAD processes and exports data in parallel from Amazon Redshift's compute nodes to Amazon S3, it reduces network overhead and therefore the time needed to read a large number of rows. When you use the JSON option with UNLOAD, Amazon Redshift writes JSON files in which each line contains one JSON object representing a full record of the query result. In the JSON file, Amazon Redshift types are unloaded as their closest JSON representation. For example, Boolean values are unloaded as true or false, NULL values are unloaded as null, and timestamp values are unloaded as strings.
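To illustrate the one-object-per-line layout and the type mapping described above, here is a minimal Python sketch that reads such a file with the standard `json` module. The two sample records, their column names, and the timestamp text format are invented for illustration; they are assumptions, not captured UNLOAD output.

```python
import json
from datetime import datetime

# Illustrative sample only (assumed columns and timestamp format): two records
# laid out as JSON Lines, one JSON object per line, as described for UNLOAD JSON.
SAMPLE_UNLOAD_OUTPUT = """\
{"id": 1, "is_active": true, "nickname": null, "created_at": "2022-03-01 10:15:00"}
{"id": 2, "is_active": false, "nickname": "sam", "created_at": "2022-03-02 08:00:00"}
"""


def read_unloaded_json_lines(text):
    """Parse JSON Lines text into a list of dicts, skipping blank lines."""
    records = []
    for line in text.splitlines():
        if line.strip():
            records.append(json.loads(line))
    return records


records = read_unloaded_json_lines(SAMPLE_UNLOAD_OUTPUT)
for record in records:
    # JSON true/false becomes bool, null becomes None, and timestamps stay str,
    # so they must be parsed explicitly if a datetime is needed.
    created = datetime.fromisoformat(record["created_at"])
    print(record["id"], type(record["is_active"]).__name__,
          record["nickname"], created.year)
```

Because timestamps arrive as plain strings, the consumer decides how to parse them; here `datetime.fromisoformat` is used only because the assumed sample format happens to be ISO-like.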

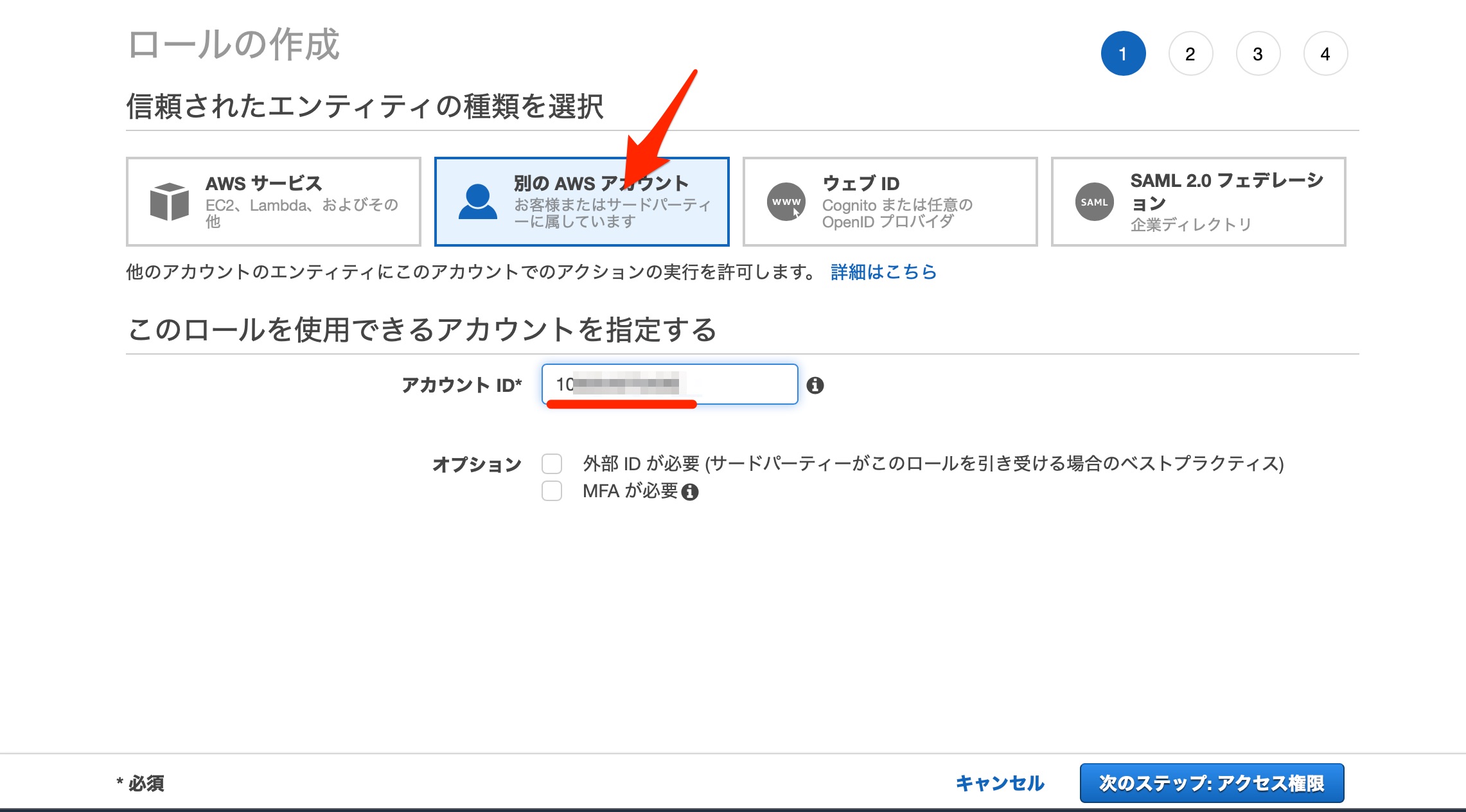

Amazon Redshift is a fast, scalable, secure, and fully managed cloud data warehouse that makes it simple and cost-effective to analyze all your data using standard SQL. Amazon Redshift offers up to three times better price performance than any other cloud data warehouse. Tens of thousands of customers use Amazon Redshift to process exabytes of data per day and power analytics workloads such as high-performance business intelligence (BI) reporting, dashboarding applications, data exploration, and real-time analytics.

As the amount of data generated by IoT devices, social media, and cloud applications continues to grow, organizations are looking to easily and cost-effectively analyze this data with minimal time-to-insight. A vast amount of this data is available in semi-structured format and needs additional extract, transform, and load (ETL) processes to make it accessible or to integrate it with structured data for analysis.

If you're using server-side encryption with S3-managed encryption keys (SSE-S3), then your S3 bucket encrypts each of its objects with a unique key. As an additional safeguard, the key itself is also encrypted with a root key that is regularly rotated.
When unloading data from your Amazon Redshift cluster to your Amazon S3 bucket, you might encounter the following errors:

DB user is not authorized to assume the AWS Identity and Access Management (IAM) Role error

error: User arn:aws:redshift:us-west-2::dbuser:/ is not authorized to assume IAM Role arn:aws:iam:::role/

403 Access Denied error

(500310) Invalid operation: S3ServiceException:Access Denied,Status 403,Error AccessDenied,

Note: Use the UNLOAD command with a SELECT statement when unloading data to your S3 bucket. You can unload text data in either a delimited or a fixed-width format, regardless of the data format that was used when the data was loaded. You can also specify whether the output should be compressed as gzip files.

Specified unload destination on S3 is not empty

ERROR: Specified unload destination on S3 is not empty. Consider using a different bucket / prefix, manually removing the target files in S3, or using the ALLOWOVERWRITE option.

This error might happen when you run the same UNLOAD command again and unload files into a folder where data files are already present. For example, you get this error if you run the following command twice:

unload ('select * from test_unload')
to 's3://testbucket/unload/test_unload_file1'
iam_role 'arn:aws:iam::0123456789:role/redshift_role';

Resolution

DB user is not authorized to assume the AWS IAM Role error

If the database user isn't authorized to assume the IAM role, then check the following:

Verify that the IAM role is associated with your Amazon Redshift cluster.
Verify that there are no trailing spaces in the IAM role used in the UNLOAD command.
Verify that the IAM role assigned to the Amazon Redshift cluster is using the correct trust relationship.

403 Access Denied error

If you receive a 403 Access Denied error from your S3 bucket, confirm that the proper permissions are granted for your S3 API operations: "Version": ""
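The UNLOAD example above can also be assembled in code with guards against two of the causes discussed: trailing spaces in the IAM role ARN and a destination prefix that already holds files. The following is a minimal Python sketch under stated assumptions: `check_role_arn`, `sql_literal`, and `build_unload` are hypothetical helper names (not part of any AWS SDK), the ARN pattern is a simplified check, `FORMAT JSON` is assumed to be the spelling of the JSON option, and the code only produces SQL text that you would still submit through your own SQL client.

```python
import re

# Simplified, assumed shape of an IAM role ARN; not an exhaustive validator.
ROLE_ARN_PATTERN = re.compile(r"arn:aws:iam::\d+:role/[\w+=,.@/-]+")


def check_role_arn(role_arn):
    """Hypothetical guard: reject surrounding whitespace or an unexpected shape."""
    if role_arn != role_arn.strip():
        raise ValueError("IAM role ARN has leading or trailing whitespace")
    if not ROLE_ARN_PATTERN.fullmatch(role_arn):
        raise ValueError(f"not an IAM role ARN: {role_arn!r}")
    return role_arn


def sql_literal(value):
    """Quote text as a SQL string literal, doubling embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_unload(query, s3_prefix, role_arn, json_format=False,
                 allow_overwrite=False):
    """Hypothetical helper that returns UNLOAD statement text (no AWS calls)."""
    parts = [
        f"UNLOAD ({sql_literal(query)})",
        f"TO {sql_literal(s3_prefix)}",
        f"IAM_ROLE {sql_literal(check_role_arn(role_arn))}",
    ]
    if json_format:
        parts.append("FORMAT JSON")  # assumed spelling of the JSON option
    if allow_overwrite:
        # Lets a rerun replace existing files under the prefix instead of
        # failing with "Specified unload destination on S3 is not empty".
        parts.append("ALLOWOVERWRITE")
    return "\n".join(parts) + ";"


if __name__ == "__main__":
    print(build_unload("select * from test_unload",
                       "s3://testbucket/unload/test_unload_file1",
                       "arn:aws:iam::0123456789:role/redshift_role",
                       allow_overwrite=True))
    try:
        check_role_arn("arn:aws:iam::0123456789:role/redshift_role ")
    except ValueError as err:
        print("rejected:", err)
```

Failing early on a stray space is cheaper than waiting for the authorization error at run time; whether to add ALLOWOVERWRITE or pick a fresh prefix per run is a design choice, since overwriting discards the earlier export.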
